What's The Problem?

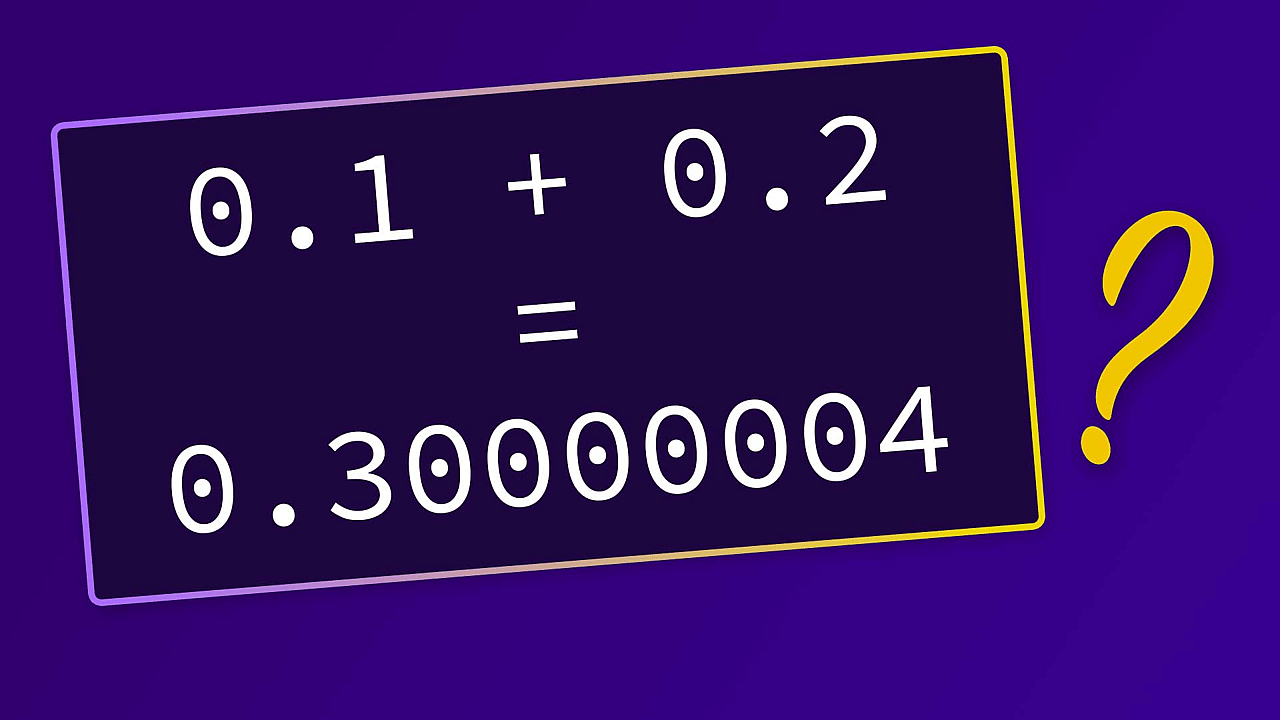

Here's an example in JavaScript, though similar examples can be found in basically all programming languages:

const result = 0.1 + 0.2;

console.log(result); // prints 0.30000000000000004

What's that? Where is that 4 at the end coming from? 0.1 + 0.2 should just yield 0.3, right?

Computers have trouble with some fractional numbers - and this article explores why that's the case and what you should keep in mind.

Computers Use The Binary Numeral System Internally

The root of the problem is that computers use the binary numeral system under the hood. Understanding how it works and how you can convert numbers from the decimal numeral system to the binary system helps a lot. I have another article on that - definitely consider going through it first!

In short, the binary numeral system only has two digits to work with: 0 and 1. All other numbers have to be expressed as combinations of these two digits.

It's actually the same in the decimal system - there you have the digits 0 to 9, and all other numbers have to be expressed with these digits (e.g. 19 is 1 and 9 combined). But we're more used to the decimal system, which is why we typically have no problems with that.
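JavaScript can show this digit-by-digit representation directly: the built-in `Number.prototype.toString(2)` writes a number in base 2, and `parseInt(string, 2)` reads a base-2 string back. A minimal sketch using the 19 from above:

```javascript
// 19 in decimal is 1 * 10 + 9 * 1.
// In binary it is 1 * 16 + 0 * 8 + 0 * 4 + 1 * 2 + 1 * 1.
const n = 19;

// Write the number using only the digits 0 and 1.
const binary = n.toString(2);
console.log(binary); // prints 10011

// Read the base-2 string back into a regular number.
const back = parseInt(binary, 2);
console.log(back); // prints 19
```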

But back to the binary numeral system. Why does it cause the strange error mentioned above?

Some Numbers Can't Be Expressed Exactly

In the example above (0.1 + 0.2), the problem is that neither 0.1 nor 0.2 can be stored exactly by the computer. While they are easy to express in the decimal system, that's not the case in the binary system.

To understand the problem, let's take a look at a number that's impossible to express and store exactly in the decimal numeral system: 1/3.

1/3 is a valid number, but not one that we can write down exactly as a decimal number. Instead, it's 0.33333333... and we could keep adding 3s at the end until the end of time.

Now consider the case that you want to add 1/3 + 1/3 + 1/3. As humans, we know that this equals 1.

But if we only had room for a limited number of 3s and did the math with, say, 3 * 0.33333333, we would get 0.99999999 instead.

Of course, as humans, we know better. We know the concept of fractions and we know that 3 * 1/3 = 1.

But computers don't know that - and that is the problem!
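The effect of a limited number of digits can be simulated. This is a hypothetical illustration, not how real hardware stores numbers: it keeps 1/3 with only 8 decimal digits, stored as an integer count of 0.00000001 units so that no binary rounding gets mixed in, and adds it three times.

```javascript
// Hypothetical "decimal computer" that keeps 8 digits after the decimal point.
const DIGITS = 8;
const SCALE = 10 ** DIGITS; // 100000000 units make up 1

// 1/3 cut off after 8 digits: 33333333 units, i.e. 0.33333333.
const oneThird = Math.floor(SCALE / 3);

// 1/3 + 1/3 + 1/3 with the cut-off value.
const sum = oneThird + oneThird + oneThird; // 99999999 units

console.log(sum === SCALE); // prints false: the result is not exactly 1
console.log((sum / SCALE).toFixed(DIGITS)); // prints 0.99999999
```

The missing 0.00000001 is exactly the part of 1/3 that did not fit into the available digits.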

Let's switch to the binary system - because that's the numeral system with which computers work internally.

0.1 is 1/10 in the decimal system (and that's of course no problem - we can express this number exactly).

But in the binary system, 0.1 is 0.00011001100110011..., where the pattern 0011 repeats infinitely.
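The repeating pattern can be checked with exact integer arithmetic, without any floating-point numbers involved. A minimal sketch; `fractionToBinary` is a hypothetical helper written for this illustration, not a built-in. It uses the common repeated-doubling method (each doubling moves one binary digit in front of the point) and stops as soon as a remainder shows up a second time, because from then on the digits repeat.

```javascript
// Hypothetical helper: writes numerator/denominator (0 < numerator < denominator,
// both integers) in binary and puts a repeating part in parentheses.
function fractionToBinary(numerator, denominator) {
  const seen = new Map(); // remainder -> index of the digit it produced
  let digits = "";
  let remainder = numerator;

  while (remainder !== 0) {
    if (seen.has(remainder)) {
      // Same remainder as before: the digits since then repeat forever.
      const start = seen.get(remainder);
      return "0." + digits.slice(0, start) + "(" + digits.slice(start) + ")";
    }
    seen.set(remainder, digits.length);

    // Doubling shifts the next binary digit in front of the point.
    remainder *= 2;
    if (remainder >= denominator) {
      digits += "1";
      remainder -= denominator;
    } else {
      digits += "0";
    }
  }
  return "0." + digits; // the expansion ends after finitely many digits
}

console.log(fractionToBinary(1, 10)); // prints 0.0(0011)
console.log(fractionToBinary(1, 4)); // prints 0.01
```

0.0(0011) is just a compact way of writing 0.000110011001100110011...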

In the decimal system, the digits after the decimal point stand for fractions with powers of 10 in the denominator (0.2 is 2/10, 0.8 is 8/10, 0.25 is 2/10 + 5/100 etc.).

In the binary system, it's the same, but with powers of 2 instead of powers of 10. Hence 0.1 has to be expressed as a sum of fractions whose denominators are powers of 2 (2^x):

0.1 = 0/2⁰ + 0/2¹ + 0/2² + 0/2³ + 1/2⁴ + 1/2⁵ + 1/2⁸ + ...

Since we can only use fractions whose denominators are powers of 2, there is no way of hitting 0.1 exactly. We can only get closer and closer, just as we can get closer and closer to 1/3 in the decimal system.
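The "closer and closer" behaviour becomes visible when adding up the first terms of this series. A short sketch; the exponents are read off the repeating 0011 pattern (binary digits 4, 5, 8, 9, 12, 13, ... are 1). Each term 1/2^x and each partial sum here is exactly representable as a JavaScript number, so the printed values are not distorted by extra rounding.

```javascript
// Positions of the 1s in the binary expansion of 0.1 (first 17 digits).
const exponents = [4, 5, 8, 9, 12, 13, 16, 17];

let sum = 0;
for (const x of exponents) {
  sum += 1 / 2 ** x;
  console.log(`+ 1/2^${x} -> ${sum}`);
}

// Every partial sum stays below 0.1; the remaining gap shrinks with each term
// but never reaches zero.
console.log(0.1 - sum);
```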

This series of fractions for 0.1 can also be rewritten with negative exponents:

0.1 = 0 * 2⁰ + 0 * 2⁻¹ + 0 * 2⁻² + 0 * 2⁻³ + 1 * 2⁻⁴ + 1 * 2⁻⁵ + 0 * 2⁻⁶ + 0 * 2⁻⁷ + 1 * 2⁻⁸ + ...

As you learned in the other article, this is how you can convert decimal numbers to binary numbers. You can now take the multipliers (the 1s and 0s) and concatenate them to get the binary number:

0.1 = 0.00011001...

So numbers that can be expressed exactly in one numeral system can't necessarily be expressed exactly in other numeral systems.
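Which fractions are affected follows a simple rule: a fraction in lowest terms has a finite expansion in a given base exactly when every prime factor of its denominator also divides the base. Decimal (10 = 2 * 5) allows the factors 2 and 5, binary only 2. So 1/4 is exact in both systems, 1/10 and 1/5 only in decimal, and 1/3 in neither. A minimal sketch; `gcd` and `terminates` are hypothetical helpers written for this illustration:

```javascript
// Greatest common divisor (Euclid's algorithm).
function gcd(a, b) {
  while (b !== 0) {
    [a, b] = [b, a % b];
  }
  return a;
}

// Hypothetical helper: does numerator/denominator have a finite expansion in `base`?
function terminates(numerator, denominator, base) {
  // Reduce the fraction to lowest terms first.
  let d = denominator / gcd(numerator, denominator);

  // Remove every factor the denominator shares with the base.
  let g = gcd(d, base);
  while (g > 1) {
    while (d % g === 0) d /= g;
    g = gcd(d, base);
  }
  // Nothing left means all prime factors were factors of the base.
  return d === 1;
}

for (const [n, d] of [[1, 10], [1, 5], [1, 4], [1, 3]]) {
  console.log(`${n}/${d}: decimal ${terminates(n, d, 10)}, binary ${terminates(n, d, 2)}`);
}
```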

How Are Computers Dealing With That?

Computers and programming languages convert numbers all the time. And to be able to store values like 0.1 (the decimal number) at all, they have to round the binary value at some point.

So the binary number 0.00011001100110011... gets rounded to a value like 0.0001100110011001 (this is just an example!). How many binary digits are kept before rounding depends on the precision with which the number is stored. For example, a 32-bit (single-precision) floating-point number reserves 23 bits for these digits, while a 64-bit (double-precision) number, which is what JavaScript uses for all of its numbers, reserves 52.
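Because JavaScript stores every number as a 64-bit double, this rounding has already happened by the time you can look at 0.1. A small sketch that makes the stored value visible with the built-in `toString(2)` and `toPrecision()` methods:

```javascript
// The stored binary value: long, but finite, because it was rounded.
const stored = (0.1).toString(2);
console.log(stored); // starts with 0.000110011..., then simply ends

// Asking for more decimal digits than usual shows that the stored value
// is slightly larger than 0.1.
console.log((0.1).toPrecision(20)); // prints 0.10000000000000000555
```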

Precision defines how many bits will be occupied in memory to store the number. The more bits are made available, the more digits after the decimal point can be stored before rounding occurs.

You might've heard terms like single precision or double precision when defining variables in certain programming languages. That's what they mean: how many binary digits are stored exactly before rounding occurs (single precision uses 32 bits per number, double precision 64 bits).
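JavaScript itself only has double-precision numbers, but the built-in `Math.fround()` rounds a value to the nearest single-precision number. That makes the difference between the two precisions visible:

```javascript
// Math.fround() rounds to the nearest 32-bit (single-precision) float.
const asSingle = Math.fround(0.1);
// Regular JavaScript numbers are 64-bit (double-precision) floats.
const asDouble = 0.1;

console.log(asSingle); // prints 0.10000000149011612
console.log(asDouble); // prints 0.1

// With fewer bits, the rounding error is large enough to show up when printed.
console.log(asSingle === asDouble); // prints false
```

The double is not exactly 0.1 either, but its error is small enough that JavaScript prints it as 0.1.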

Note: Numbers aren't actually stored as 0.000110011... in memory. Instead, they are basically stored in scientific notation (a sign, an exponent and the significant digits) - you can learn more here. So the precision is not the number of digits after the decimal point! But it still determines how many digits can be expressed before rounding kicks in.
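This scientific notation can be inspected directly. The sketch below uses the built-in `DataView` and `BigInt` APIs to split the 64 bits of 0.1 into the three fields of the IEEE 754 double-precision format: 1 sign bit, 11 exponent bits and 52 fraction bits.

```javascript
// Write 0.1 into 8 bytes as a double and read the raw bits back as an integer.
const view = new DataView(new ArrayBuffer(8));
view.setFloat64(0, 0.1);
const bits = view.getBigUint64(0);

// Split the 64 bits into their fields.
const sign = Number(bits >> 63n); // 0 = positive
const exponent = Number((bits >> 52n) & 0x7ffn) - 1023; // stored with a bias of 1023
const fraction = bits & ((1n << 52n) - 1n); // binary digits after the leading "1."

console.log(sign); // prints 0
console.log(exponent); // prints -4
console.log(fraction.toString(2).padStart(52, "0"));
// So 0.1 is stored as 1.1001100110011...1010 (binary) * 2^-4.
```

Note how the last digits end in 1010 instead of continuing the 1001 pattern: that's the rounding at the 52nd bit.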

In calculations like 0.1 + 0.2, neither 0.1 nor 0.2 can be expressed exactly in the binary system, so their rounding errors carry over into the result - that's why a result like 0.30000000000000004 is computed and displayed.
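Printing 17 significant digits with the built-in `toPrecision()` shows what happens: the sum and the literal 0.3 end up as two different, neighbouring doubles.

```javascript
const sum = 0.1 + 0.2;

// 17 significant digits are enough to tell neighbouring doubles apart.
console.log(sum.toPrecision(17)); // prints 0.30000000000000004
console.log((0.3).toPrecision(17)); // prints 0.29999999999999999

// The errors of 0.1 and 0.2 add up, and the sum lands on the double just
// above the one JavaScript uses for 0.3.
console.log(sum === 0.3); // prints false
```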

How To Deal With Inexact Values

There are various things to consider when working with fractional numbers - and various strategies for working around problems.

Don't Compare For Equality

You should try to avoid comparing for (in-)equality like this:

const result = 0.1 + 0.2;

console.log(result === 0.3); // false

In JavaScript, this prints false, and you'll see the same in other languages that use binary floating-point numbers - because, as shown above, 0.1 + 0.2 is stored internally as 0.30000000000000004 (which is not equal to just 0.3).

So rounding errors can and often will influence comparisons like this.

Instead of checking for (in-)equality, it's therefore preferable to use operators like < and > - for example, by checking whether the difference between two values is smaller than some small tolerance.
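A tolerance-based comparison could look like the following minimal sketch. `nearlyEqual` and its default tolerance are assumptions for illustration: the built-in `Number.EPSILON` (about 2.2e-16, the gap between 1 and the next larger double) suits values around 1, while much larger values or longer calculations need a tolerance chosen for the application.

```javascript
// Hypothetical helper: two numbers count as equal if they differ by less
// than `tolerance`.
function nearlyEqual(a, b, tolerance = Number.EPSILON) {
  return Math.abs(a - b) < tolerance;
}

const result = 0.1 + 0.2;

console.log(result === 0.3); // prints false
console.log(nearlyEqual(result, 0.3)); // prints true
console.log(nearlyEqual(result, 0.31)); // prints false
```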

Reduce The Number Of Decimal Places When Outputting Values

When showing results of calculations like 0.1 + 0.2 to end users, you might not want to rely on exactness. For example, when working with JavaScript, your users could be facing ugly and confusing results like 0.30000000000000004 when visiting your website.

A good strategy therefore is to round the number to a certain number of digits after the decimal point before showing it.

For example, in JavaScript, you can do that like this:

const result = 0.1 + 0.2;

const formattedResult = result.toFixed(2); // toFixed(x) rounds and returns a string with x digits after the decimal point

console.log(formattedResult); // prints 0.30
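Two related options, sketched with built-in APIs: turning the string from `toFixed()` back into a number, and `Intl.NumberFormat`, which is designed for formatting numbers for display and drops unneeded trailing zeros. The last line shows a caveat: `toFixed()` rounds the stored binary value, not the decimal you typed.

```javascript
const result = 0.1 + 0.2;

// toFixed() returns a string; Number() converts it back if you need a number.
const rounded = Number(result.toFixed(2));
console.log(rounded); // prints 0.3

// Intl.NumberFormat limits the digits for display.
const formatter = new Intl.NumberFormat("en-US", { maximumFractionDigits: 2 });
console.log(formatter.format(result)); // prints 0.3

// 1.005 is stored as slightly less than 1.005, so it rounds down.
console.log((1.005).toFixed(2)); // prints 1.00
```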